Leading the AI transformation of your company

Prof. Gregory LaBlanc, Lecturer, Haas School of Business and Berkeley Law

15:13 minutes: the average reading time for this report.

Technology both improves and harms environmental sustainability. It is a double-edged sword. While emerging technologies such as AI, IoT, AR/VR and others are being used to help achieve sustainability goals, these same technologies will leave a hefty environmental footprint once they reach mainstream adoption.

Explore a sneak peek of the full content

If we continue on our current trajectory, data centers will leave a huge footprint on our planet. Data centers' contribution to CO2 emissions will rise 12X by 2030, and their water consumption will rise 17X over the same period. They will also be major contributors to e-waste in landfills.

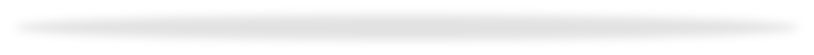

We have developed a framework based on the IT lifecycle, consisting of a stack of building blocks. We believe this framework will help facilitate a comprehensive and structured implementation of a sustainability strategy within the data center. It is intuitive and makes it easy to identify potential actions and the impact each would have.

As organizations move forward with their sustainability strategies, we highlight key insights that will help drive them. We also identify metrics that can be used to measure progress.

We have painstakingly built a 5-level maturity model that can be used as a guideline to assess where the organization stands. We also give insights on what would be required to move to the next level.

Finally, we put all of this together to show how organizations should approach their sustainability journey.

Credits

Author@lab45: Hussain S Nayak, Sujay Shivram, Chandan Jha

Author@Wipro: Susan Kenniston

14:37 minutes: the average reading time for this report.

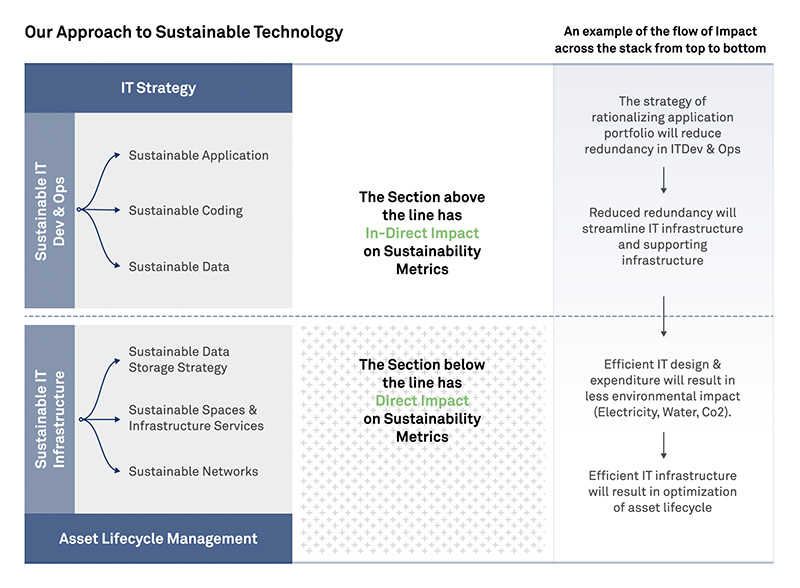

Decentralized Identity (DID) systems address inefficiencies and security breaches, making them extremely useful for enterprises. We explore in detail the important industry use cases where these solutions can be applied and the ways to implement them.

The KYC process in banks and financial institutions is mandated by the government and can be painful for both the bank and its customers. We examine how DID can not only simplify the process but also ensure high trust and make the process fraud-proof by eliminating intermediaries and returning to trusted direct relationships.

In the food supply chain, IoT can provide real-time data and insights. However, both the current IoT supply chain and the food supply chain face numerous challenges. Using DID and verifiable credentials in the food and perishables supply chain can provide a tamper-proof, auditable record of a product's journey from its origin to its destination. Such a solution will have a lasting impact not only on quality and cost control for organizations but also on public nutrition, health, and sustainability. We explore how.

When managing electronic health records, healthcare organizations face two main challenges: privacy and security, and interoperability across the multiple systems in play. By giving patients greater control over their health information, Decentralized Identity solutions can enhance trust and confidence in the healthcare system, leading to better health outcomes. We explore the details with an example.

Credits

Author@lab45: Sujay Shivram, Abhigyan Malik

17:41 minutes: the average reading time for this report.

Healthcare is transforming with a focus on accessibility and a priority on IT; the global market is projected to reach USD 975 billion by 2027. AI and machine learning, expected to be in use in 90% of US hospitals by 2025, streamline the diagnosis of chronic conditions. Emerging technologies are driving change and influencing preventive and home care across the healthcare landscape.

Healthcare IT is a top priority for providers. Nearly 80% of healthcare providers consider it one of their top 5 strategic priorities, with investments in software including revenue cycle management, security and privacy, patient intake/flow, clinical systems, and telehealth. AI, ML, and the Internet of Medical Things (IoMT) are developing rapidly and are expected to be used in 90% of US hospitals by 2025. The global mHealth apps market is growing, driven primarily by the adoption of fitness and medical apps. Technology can improve patient care, reduce medical errors, and expand hospital boundaries. However, data interoperability and regulation are necessary, and patient engagement is crucial for a better healthcare system.

Empowering customers through GenAI

Credits

Lead Authors@lab45: Anju James

Contributing Authors@lab45: Hussain S Nayak

This is your invitation to become an integral part of our Think Tank community. Co-create with us to bring diverse perspectives and enrich our pool of collective wisdom. Your insights could be the spark that ignites transformative conversations.
